Today was our group test. I let students choose their own groups, and I'm not sure whether this was a good idea. Some students didn't seem sure who to work with. Others had a good sense, but I had said that each group must have three people. This meant that those hoping to form a group of four had to decide who was going to leave. Not doing random groups today made me really appreciate doing random groups.

Each group received the test and started working on their whiteboards. I had each group start at a different question. Some groups were very efficient and others really seemed to have a hard time making headway. I went around and did lots of listening and asked if students could clarify their thinking for me.

It was pretty obvious that many students had not studied for the test. There were lots of incorrect assumptions along with lots of simple errors. I really like the group test because of all the discussion and learning that takes place. I also like that I have some conversations (which I recorded today) that I can use to supplement (one way or the other) a student's test results. What I don't like about this extra information is that I don't have a clean way to count it.

I struggle with how to assess the group test (and maybe it just needs to be formative). I don't want to penalize a student who learned a ton between the group test and the individual test. I also don't want students to feel that because they did well on the group test they don't need to prepare for the individual test. In any case, I do have a record of the conversations along with photos of all the work, which I can use to help make an overall judgement.

The plan for tomorrow was to do the individual test, but I don't think my students are ready for that yet.

Showing posts with label Assessment.

Tuesday, December 5, 2017

Wednesday, November 8, 2017

MPM1D1 - Day 44 Test Day

We had our second test today. Students seemed well prepared in general. I had a few say to me as they came in that they were going to fail, but this seems to be a normal approach for these students (which is kind of sad). It turns out they did alright.

I was very pleased with how well most did on the question about finding the equation of a line between two points. I often talk about this being the most difficult part of the course, but many of them said that they found it easy.

There are a few things that we need to work on, mostly number sense (exponents and solving equations), but we have lots of time to come back to these ideas. I'm also questioning how I assess. The test is a great place to assess thinking, problem solving and communication skills, but I'm wondering if smaller, more frequent (formative?) quizzes might be a better option for assessing knowledge. What I mean by this is that most students seem to be able to solve an equation when it is given in context and as part of a bigger problem. They seem to be able to reason their way to the answer and they can check to see that it makes sense. But if I strip away the context and ask them to just solve a two-step equation, a good number of them will struggle. We'll keep plugging away at it.

Monday, September 25, 2017

MPM1D1 - Day 15 Desmos Intro & First Assessment

We started the class by revisiting Hula Hoop Relay. I had a set of Chromebooks and we made our way to Desmos. This would be our first use of Desmos. We had a few password hiccups and a couple of network issues but they were fairly easily sorted out.

I demonstrated how to create a table, adjust the scale and find the equation of the line of best fit, using one group's set of data. As it turns out, the slope and y-intercept had the same absolute value. What an unfortunate and potentially confusing coincidence. The potential was there to dive into expanding and factoring binomials, but it wouldn't serve the purpose of today's lesson, so I let it go. We talked about how we could find how long it would take for 43 people to do the challenge, and we discussed how we could either extrapolate from the graph or use the equation. It was a nice link between the graph, the equation and what was really going on. We did it both ways, and some students seemed surprised that everything matched up.

After our Desmos introduction, I felt that some students would be able to do it on their own while many would need a little more practice. There will be plenty of opportunity for more practice.

We put the computers away and did the mid-cycle assessment (using the term "mid" very loosely). A couple of students seemed really worried about how they were going to do. I told them to do their best, as it would give them a sense of what they needed to work on for the test at the end of the cycle. This is our first real assessment and I intend for it to be formative. I'm hoping it will give students some feedback on what they need to work on. It will also give me a chance to see if there is any particular topic that we need to revisit.

Sunday, October 16, 2016

When The Class Bombs A Test

(Photo by Wendy Berry)

Last Thursday I gave a test to my grade 12 Advanced Functions class. It was our first test of the year and the results were disastrous. My first hint that things may not go well came the day before the test.

Typically, the day before a test, students in this class keep me busy for what seems like every minute of the day. Some will come in before school and ask questions. Some will sit in my room during their spares and work so they can ask questions. There's usually a flurry of activity at lunch, with students working in groups at their desks or at the board. My prep period becomes an impromptu tutorial for students lucky enough to have a spare at the same time. This year, I had a student or two at lunch and one who stopped by for a few minutes during my prep period.

While students wrote the test I could tell things weren't going well. Some were taking the long way around on a lot of the questions, which meant they would need extra time (apologies to their period two teachers). Many of them seemed to be struggling. As they handed the test in, a number of them asked if I could drop this test mark or if we could do a rewrite. Not a good sign.

I wasn't going to look at the tests that night, but I was curious to see what the results were actually like. My suspicions about the class as a whole doing poorly were confirmed. The marks were terrible. I spent a lot of time thinking about why they were so bad. How much of it was failure on my part? How much did they need to take ownership for? How could we rectify the poor result?

Without enough time to come up with a solid plan for what to do next, I decided that my number one priority had to be for students to master the content. The next day I put them in groups of three and gave each group a copy of the test. I had the groups work through the test at the boards. I was able to circulate, listen to the conversations and provide some leading questions when they were needed. There was some great discussion, problem solving and peer teaching going on. I think most students learned at least a little and some learned a lot.

The logical thing to do seems to be to have a retest. Past experience has shown me that the results from retests are generally not much better than the original test. Students have good intentions but then run out of time to prepare, so their marks improve very little. I think that walking through the tests in groups helped, but they won't be writing the test in groups. To help ensure that every student who rewrites the test is well prepared, I have decided that I will meet with each of them to go over their test. I will ask questions that help identify what they know and what they need to work on. Hopefully this gives them a list of topics to go over in preparation for the retest.

It seems like a lot of work but I'm hopeful it will pay off. What strategies do you use when tests or other forms of assessment don't go the way you expected?

Sunday, June 7, 2015

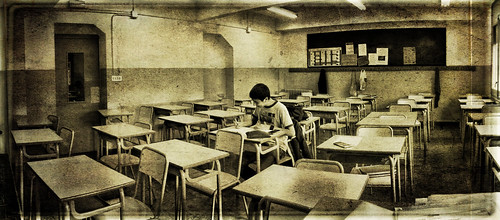

Are Exams Useful?

(Photo: "Solo Exam" by Xavi)

I think that for those who are interested in what Mr. Cooper said, there's no reason why the conversations about assessment can't start now. I had one such conversation with a colleague the other day. He told me that as he started to put his exams together he wondered "Why?". His point was that if students had already been assessed on certain expectations, what was the point of assessing them again on the same topics? I thought this was a valid point. We discussed it briefly between classes, but I think it's a discussion that could have gone on much longer.

What more can you learn about a student's learning in an hour-and-a-half to two-hour exam? One of the options we discussed is the possibility of targeted exams, so that students could show you that they have improved on a topic that they didn't master during the term. This could be as complicated as individualized exams (which would be a lot of work for the teacher) or as simple as giving everyone the same exam and giving each student a list of questions that they must complete on that exam. The goal would be for students to show the teacher that they have gained the understanding that they lacked earlier in the semester. If you decided to go this route you'd have to be a little careful with the weighting. In Ontario, 70% of a student's grade is to come from their term work, while the remaining 30% comes from a combination of exam and/or summative activity. You wouldn't want 30% of a student's mark to be determined by questions that they struggled with early on. But with a little tweaking I think this could be an effective approach.

Another option we discussed was to make the 'exam' a reflection for the student. It might be a written exam or perhaps an interview, as described here by Alex Overvijk. A reflection might involve questions such as: What was the most useful topic we covered? What was the most difficult? How do you see using any of the ideas from the course beyond the course? I'm sure there are many more, and many that would be much better than these, but the idea would be to get students thinking and reflecting about their learning.

One final option that we discussed (I'm sure there are many others) was moving away from an exam to a culminating activity that ties together multiple (perhaps all?) strands from a course. This could allow for some creativity and eliminate the time crunch of an exam. It could give the teacher a sense of how much students have grown over the course.

Up until a week ago I thought that exams were crucial, but as I've thought and talked about it over the course of the week I think I would be comfortable without one. Here are some of the concerns that I've heard about eliminating exams, and my questions about those concerns.

- Students need to know how to write exams for post-secondary. This may be true, but do we need to subject all students to exams? I know that many college courses don't have exams, and it appears that some universities are giving far fewer exams. At my school, fewer than 20% of students go on to university. Does it make sense to teach every student how to write exams when so few of them actually will? Perhaps exams could be part of the courses for university-bound students.

- Exams provide a check to see if students still know the material or to make sure they really understand it. If a student was able to cram for a test without really knowing what was going on, isn't it possible they could do the same for an exam? How much of the material from an exam is retained by students a month after the fact?

What are your thoughts about final exams? Are they necessary? Should we be eliminating them? Or should we be looking for a more effective model for exams?

Monday, January 19, 2015

EQAO Reflection

Our grade nine students wrote their Grade 9 Assessment of Mathematics (EQAO) last week. I often reflect on the process during this time, because really, what else are you going to do for two hours while supervising? This year my thinking wasn't about the pros or cons of the test but rather the way we evaluate it. The test is sent off to be marked provincially, but before that happens schools have the option to evaluate it in order to include some or all of the mark as part of the student's final grade. The thinking here is that if it counts for something then perhaps students will take it seriously. At my school we count the test for 10% of a student's final grade. Then about a week later they write the final exam, which counts for 20% of their grade.

The test consists of two booklets, each of which must be completed in an hour. Each booklet is made up of seven multiple-choice questions, followed by four longer 'open response' questions, and finishes with seven more multiple-choice questions. Once the second booklet is completed, students are asked to complete a questionnaire.

My observation has been that more often than not students come into the test underprepared, and it serves as a bit of a wake-up call to them. They then (hopefully) use the remaining classes to prepare for the final exam.

This year I have decided that I am not happy with counting the test for any portion of the students' final marks. In fact, my students did so poorly that after the fact I told them that I was not going to count it at all towards their mark and here's why:

1. Time

Many students did not have time to complete the test. They had an hour to complete each of the two booklets. For the second year in a row, my strongest students did not complete the booklets on the first day. These students were very concerned about the impact it was going to have on their overall grade. Rather than providing an incentive to do well, it caused a great deal of anxiety. As a math teacher, my goal is to help students reduce their anxiety towards math, not contribute to it. I also try to evaluate what a student knows and does not know. If a question is left blank, I have no idea if it was because the student ran out of time or because they did not know how to do it. By removing time from the equation I can make a better judgement of what the student knows.

2. Multiple Choice

I have decided that I disagree with the multiple-choice questions. They obstruct my view of what the student does or does not know. Some students will get the correct answer by guessing. Others will get the incorrect answer by guessing. In either case, I am unable to see the process that allowed them to arrive at their answer, and as a result I am unable to make a true judgement of their understanding of the material.

3. Feedback

I don't know much about the official feedback students get so if I'm wrong here let me know. I believe that tests get marked in the summer (the rest of the cohort will write in June) and a mark is returned to the students in the fall. This is far from immediate feedback and is anything but descriptive. Not very useful in my mind. As a teacher I can mark the work, but I'm not allowed to copy anything. This means that I can't show students where they went wrong. I can tell them that they messed up on the bicycle question but unless they can see where, I'm not sure that's useful.

4. Justification

I'd be hard pressed to justify any mark to a student or a parent given that the tests get sent off, never to be seen again. Students should be able to look at their marked work and question my judgement, which is sometimes right and sometimes wrong. In fact, I enjoy when students start questioning my evaluation as it often brings out what they truly meant to write or allows me to better understand their misconceptions.

5. Rationale

When students ask why the test has to count for a portion of their grade I struggle to give a valid reason. I typically say something along the lines of "If you're going to spend two days writing it, we may as well give you some credit for it". It's not an answer I'm comfortable with but it's all I have. One of the reasons I'm not comfortable with it is that the vast majority of my students perform much worse on the test than they do on the final exam. We could probably discuss what that says about my teaching, but let's save that for another post. The real reason that we count the test as a portion of a student's grade is that we believe that this will make them take it more seriously, which means they will perform better, which will make the school look better. Given that twelve out of eighteen students in my colleague's class said on the survey at the end that they didn't know if the test was going to count (and yes he did let them know on numerous occasions), I'm not sure that counting it is a good motivator. Besides, is this in the best interest of the student or the school?

I'm curious to know whether counting the test as a portion of a student's grade makes them perform better. What does the data say? Do schools that count the test outperform those that don't? Is this information publicly available? Are there any schools that don't count the test? Or does everyone count the test so that they don't look bad? Is this in the best interest of the students?

What does your school do about EQAO testing in grade nine math?

Tuesday, March 25, 2014

Group Test

Last semester I taught the Grade 12 Advanced Functions course. It seemed that every time a test approached a student would ask if they could write the test as a class. We all had a good laugh then inevitably someone would ask if they could write in groups instead. Needless to say the entire class thought this would be a good idea. I dismissed the idea on a number of occasions explaining how it would be difficult to have a good sense of who knew what in a group. My students, however, were very persistent and would ask every time a test was nearing.

On the second-last test of the year (just before the Christmas holidays) a student asked if they could write their test as a group. I jokingly said "Sure" and a student immediately replied "Really?". When I told my students I was just kidding, they provided a lot of reasons why such a test would be a good idea, in the hopes of getting me to change my mind. I let them know that I would think about it for a bit and get back to them, possibly a strategy for delivering a delayed "No".

As I thought about it I had a lot of questions about logistics for this possible test. They included:

1. What would such a test look like? Surely it couldn't be a regular test that students worked on in a group.

2. How will the groups be determined? Self-assigned? Teacher assigned?

3. How many students should be in a group?

4. What happens if some group members aren't pulling their weight?

5. Do students hand in one test each or one test as a group? Do they get the same mark or different marks?

6. Is this a bad way to prepare students for University?

Some of these questions and their possible solutions occupied my thoughts for several days before I had the courage to go ahead with it. I figured that if things didn't work out I could always call it a test review and give a traditional test afterwards.

Here are the answers that I came up with to the above questions.

1. The test should be less knowledge based (although there were still some knowledge questions) and should be more heavily focused on thinking and problem solving. The knowledge would show up as part of the problem solving.

2. I decided to let students choose their own groups and as it turns out students tended to group themselves by ability level, which is probably how I would have grouped them.

3. I went with three students in a group. I felt that this would allow for some good discussions while not allowing anyone to sit back and do nothing.

4. This is not that different from any other type of group work (assignment, presentation, etc.). The difference is that here I was able to watch to see who contributed what. It would have been possible for me to assign different marks based on the participation, which I didn't do.

5. Students handed in one test and received the same mark.

6. Perhaps, but it was only one test. Besides, is my goal to prepare students for university or for life beyond university? I would guess that once out of school most of these students will do far more collaborative work than they will test writing. Shouldn't I be preparing them for that as well?

Here are some things that I observed:

- There was no anxiety as students entered the class.

- There were some great discussions happening the entire time.

- There was some learning going on during the test. Students who didn't understand didn't just let their group do the work, they were trying to understand it.

- There were no questions that were left blank.

- Students seemed to be enjoying the test.

- Students reported that the time just flew by.

- We had a modified schedule the day of the test. Our class was shorter than normal but I told the class that they were welcome to stay into lunch if they wanted to. Most stayed for the period and most of lunch. I was amazed that nobody just wanted to leave.

Here are a few comments that I heard during the test:

- After some discussion with the group..."I think I understand this now"

- S1:"That works!" S2: "Yeah it does." S3: "We've got it!"

- "YES! That's it."

- "I love this test. It's great to communicate."

- A student to me: "Can you tell me...?" Me:

S:"Maybe I'll ask my group."

The test was a big hit among students. They said afterwards that they felt less stressed, they really enjoyed bouncing ideas off one another and wished that all tests could be done in the same way. From my point of view it was a great experience as well. Students were totally immersed in the work, there were lots of great discussions and the atmosphere in the class was very pleasant. It almost felt like a coffee shop, a productive coffee shop.

How did the students do? I would say that they performed at about the same level they normally would, despite the test being more challenging than a typical test I would give. My hope is that by the end of the test they came away knowing more than they would have had they written a regular test. I didn't measure this, but I suppose a regular test after the fact might have provided some insight.

This is certainly something that I will try again.

Saturday, April 24, 2010

Back-Channel Chat During Test

About a week and a half ago I read Royan's post about Test Taking with the Backchannel. My first reaction was "Is this guy crazy? You can't do that on a test". As I read the post I started to think that it was good for Royan to try this but it's not something I would ever do. By the end of the week I was planning how I could incorporate a back-channel for one of my tests. I just kept thinking about the amount of information that I would be able to gather from my students based on the questions they asked and the answers they gave (assessment for learning). It seemed like the right thing to do. Coincidentally, a few days before the test, one of my students asked if we (as a class) could do the test together. She was joking but she was very pleased to hear about the chat.

Here are the logistics. Not everyone in my class has a hand-held device, and the school doesn't have wireless, so using the back-channel in my room was out of the question. I booked a computer lab and we wrote the test there. Not ideal, but it worked. I thought about using Twitter, and had we done the test in the classroom, that's probably what we would have used. Since we were at the computers, I created a Moodle chat within our course and used that for the back-channel.

The day before the test I informed my class that they would be able to (and were encouraged to) use the chat for their test. We modelled asking good questions and providing good answers (guiding but not giving the answer).

My predictions of how the test would go, which are probably no surprise:

a) My top students would do a lot of question answering and may ask the occasional small question.

b) The middle of the class would ask lots of questions and occasionally answer a question or two.

c) The bottom of my class wouldn't contribute much to the chat.

There were a couple of surprises. The first was one of my top students, who had good conceptual understanding but couldn't quite put the nuts and bolts together. Not only did she provide some great help to get students started, but she also answered in a way that guided without giving too much away. In fact, overall I would say this was true of most answers given.

The second surprise I had was that some of my mid-mark students provided much more assistance than I had anticipated. Again the help was good help. I was very pleased.

It's unfortunate that prediction c) was in fact bang on. Unfortunate because those students are the ones that have the most to gain from this type of test. I would hope that if we did this enough they would start to see the value and buy in.

Would I do this again? Definitely. In fact, I think I would try to run all applied-level tests this way. The students in this class tend not to spend a lot of time thinking about the questions. When writing a test on their own, if they don't know how to start, they give up. This doesn't allow me to see what they really know. If they can get a hand starting, at least I can see how much they actually know instead of seeing a blank answer. Don't get me wrong: I probably wouldn't use this strategy for all classes. I'm not sure how I would feel about using a back-channel in a grade 12 university-bound class, or how cooperative students would be given the competition for scholarships and university entrance.

I would love to hear your comments.

